13 February 2023 | St Albans, UK [Vanesa Pizzuto]

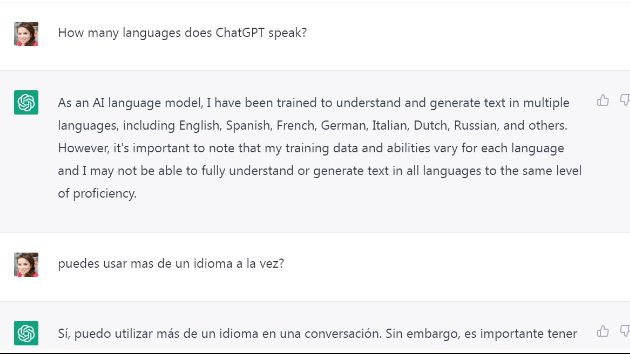

During a recent training session on crisis management, our instructor – a dyed-in-the-wool Artificial Intelligence (AI) enthusiast – spent an inordinate amount of time praising ChatGPT. Not wanting to admit I had no idea what he was referring to, I sneakily looked it up on my phone. ChatGPT, or Chat Generative Pre-trained Transformer, is essentially a chatbot: software that holds online chat conversations. It is powered by AI and was developed by OpenAI, a research laboratory in California.

You know those often-frustrating automated customer service chats? They are run by older chatbots, the great-grandparents of ChatGPT, so to speak. Now picture this: rather than leaving you immensely frustrated, the chatbot gives you strikingly human-like answers to ANY prompt… That is ChatGPT.

But there is more! ChatGPT can write computer programs and compose music. It can even write essays and poetry. To show us how simple it is to use and how powerful the results can be, our instructor gave us a demo. All he did was connect ChatGPT to Google Forms and ask it to write the outline for a paper on “Crisis Management”, along with a few paragraphs. After just a couple of seconds, voilà! In front of us was a remarkably coherent and accurate text.

After I picked my jaw up off the floor, I asked what any author worth their salt would have asked: “What about copyright?” After all, ChatGPT was trained on massive amounts of data from the internet (570 gigabytes of text, to be precise), some of which is copyrighted material. It turns out this is something of a grey area. At present, it is unclear whether ChatGPT alters original works sufficiently to avoid copyright infringement (and, if it does, whether this constitutes true creation or merely “copyright laundering”). What is clear is that OpenAI's liability for damages is very limited.¹ So, if users are faced with a lawsuit, they are pretty much on their own.

Copyright implications aside, one thing is for sure: with Google releasing its own chatbot, Bard, AI has become, now more than ever, part of our daily lives.

Some implications for education

Pretty much everyone in my family is a teacher, so, unsurprisingly, my mind went straight to the implications for education once the training was over. Picking up the phone, I called my twin sister, Inés, who is a headteacher at a bilingual school in Argentina. We talked for a while about how AI will force educational institutions to rethink plagiarism. But soon we were navigating deeper waters, discussing assessment criteria and the core goals of education. “I think AI developments will force us to focus even more on emotional literacy and critical thinking,” my sister shared. “In a time when a computer can give you an answer in a nanosecond, our emphasis must be on discernment, not content regurgitation.”

Besides the potential for academic dishonesty², one of the fears regarding ChatGPT-like technology is that it will be used to generate massive amounts of fake news. “Instead of one false report about a presumably stolen election, someone could quickly generate lots of unique reports, and distribute them on social media to make it seem like different people are writing those reports,” commented Ulises Mejias, a communication professor at the State University of New York at Oswego and co-author of The Costs of Connection.³

And for the Church?

As a Church, we have often taken the simplistic approach of demonising new technologies when faced with complex scenarios such as this. Sadly, I believe this is a terrible mistake. Not only is such technology not going to disappear (no matter how deep we bury our heads in the sand), but the longer we delay engagement, the more vulnerable we become to potential abuses and the less able we are to influence society for good. After all, unless the salt mixes with the food, it cannot give it flavour!

“Demonising technology is not the solution; we need to empower students to sift through the massive amounts of data they are exposed to every day,” Inés shared. If they are to enjoy the benefits of new technologies and successfully avoid their pitfalls, “students should not complete formal education without becoming familiar with concepts like algorithmic bias, AI ethics, and misinformation. We need to furnish them with practical tools. For example, we should all know how to do a reverse image search to check whether the picture that accompanies a post is original or not.”

Compassionate AI

Compassion is not a word often associated with AI. But I hope one day it will be! At least, it will be if, rather than demonising technology and burying our heads in the sand, we start doing something more useful, like teaching AI literacy and ethics. We can ensure that AI developments like ChatGPT significantly improve our lives and our society if we do not abdicate our rights as citizens. If we are willing to write letters to politicians and educators alike. If we push for policies that ensure minority groups are considered when data is used to train algorithms. If we update copyright laws. If we demand that compassion and safety are built in by design, from the earliest stages of AI development. For the record, compassionate AI is not only possible, as initiatives like Deep Empathy and Cogito show, but also absolutely necessary. Because, as AI scientist and author Amit Ray wisely points out, “The challenge of future artificial intelligence is to convert the modern data driven society to value driven society.”

One last thing: I asked ChatGPT how we could ensure AI is used for the greater good of humanity. This was its answer:

Ensuring that AI is used for the greater good of humanity requires a combination of technical, legal, and ethical approaches. Here are some steps that can help:

Developing ethical guidelines: Ethical guidelines should be established to guide the development and deployment of AI systems, ensuring that they are aligned with human values and respect human rights. These guidelines should address issues such as privacy, accountability, transparency, and fairness.

Ensuring transparency: AI systems should be transparent and explainable, so that their decisions can be understood and evaluated by those affected by them. This will help build trust in the technology and ensure that it is used in a responsible and ethical manner.

Promoting diversity and inclusivity: AI systems should be developed and deployed in a manner that is inclusive and reflects the diversity of society. This will help ensure that the technology does not perpetuate existing biases and discrimination.

Establishing legal and regulatory frameworks: Governments should establish legal and regulatory frameworks that govern the use of AI and protect the rights and interests of citizens. These frameworks should hold AI systems and their developers accountable for their actions and ensure that they are used in a responsible manner.

Encouraging public participation and dialogue: There should be ongoing public engagement and dialogue about the development and deployment of AI, to ensure that the technology is used in a manner that is in the public interest.

By taking these steps, we can ensure that AI is used for the greater good of humanity and that its benefits are shared by all members of society.

¹ OpenAI's liability is capped at US$100, or the amount paid for the service in the previous 12 months [Source: Will ChatGPT Pass its Probation? Stephenson Harwood Legal].

² According to Professor John Villasenor, ChatGPT could improve education rather than threaten it. He suggests teachers should incorporate its use and teach students about its limitations. “Writing a good essay from scratch requires careful, often painstaking, thought about organization, flow and communication. Learning to write without AI does indeed promote focused, disciplined thinking. But learning to successfully combine unassisted and AI-assisted writing to create truly good essays also requires these qualities.” [Source: How ChatGPT Can Improve Education, Not Threaten It, Scientific American].

³ [Source: Rise of AI chatbot technology has implications inside, beyond classrooms, State University of New York at Oswego].

[Photos: Pexels Pictures]